Biography

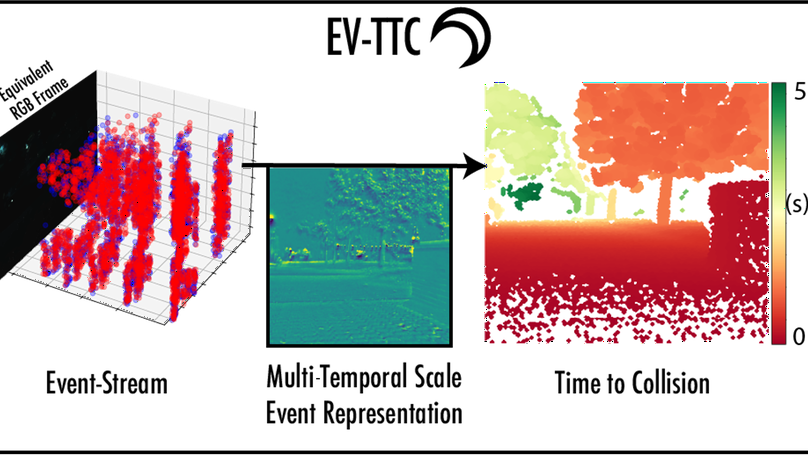

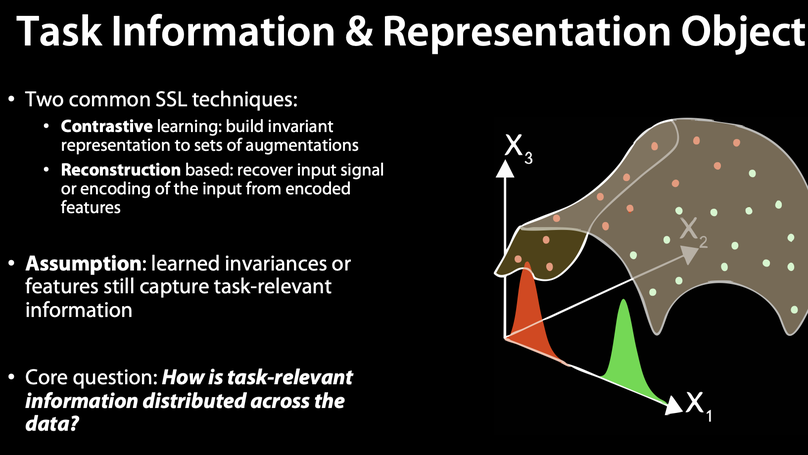

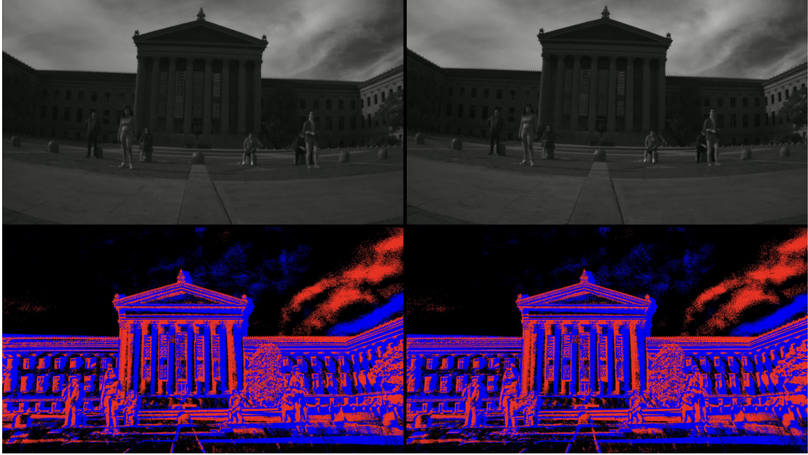

Hi, my name is Anthony Bisulco, and I am a PhD student in Electrical and Systems Engineering at the University of Pennsylvania, working on event-based vision and representation learning. Previously, I was a machine learning research engineer at Samsung Research America, in the Samsung Artificial Intelligence Center (SAIC) NY. This website hosts my personal projects and blog. If you have any feedback on the work in this portfolio, please feel free to contact me at abisulco@seas.upenn.edu.

Download my résumé.

Interests

- Computer Vision

- Machine Learning

- Computational Neuroscience

- Robotics

- Hardware

- Imaging

Education

Candidate for a PhD in Electrical and Systems Engineering

University of Pennsylvania

MEng in Electrical and Computer Engineering, 2019

Cornell University

BSc in Electrical and Computer Engineering, 2018

Northeastern University